The Internet Was an Argument

I’m a PM in Azure Infra for IaaS. I don’t have a background in distributed systems. Most of the hard problems I run into at work are distributed computing problems, and this winter I took CS244C at Stanford to stop pattern-matching my way through them. The course is taught by Keith Winstein and David Mazières, two professors who helped build pieces of the internet and aren’t shy about telling you where the seams are.

I used paper-shepherd, a Claude Code plugin I built for reading academic papers using Keshav’s three-pass method, to work through most of the papers in this series.

This is Part 1 of 4.

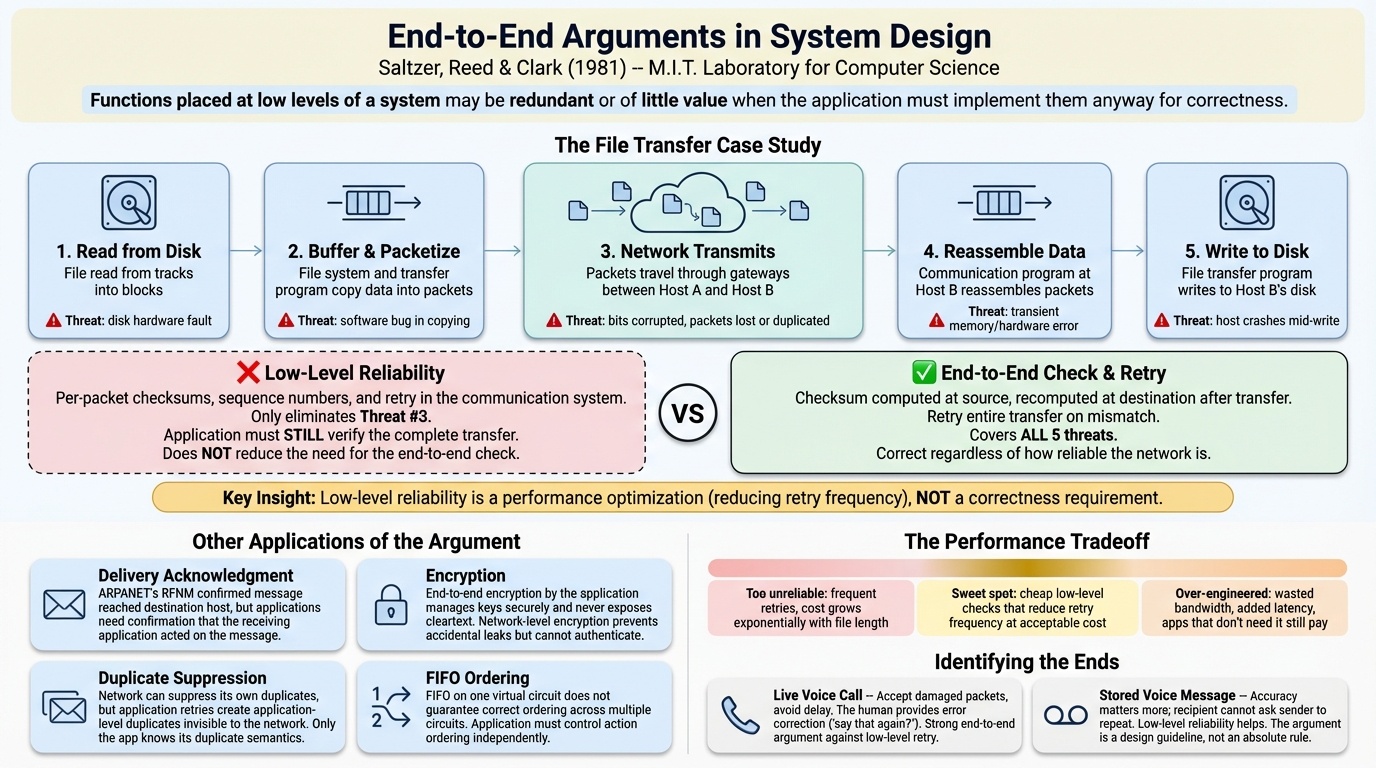

End-to-End Arguments in System Design

The first paper we read was Saltzer, Reed, and Clark’s “End-to-End Arguments in System Design” from 1981. A student in class called it “kind of like a blog post,” and Winstein agreed. He borrowed a term from his journalism days and called it “a scoop of ideas,” meaning someone noticed a pattern that was already happening and was the first to write it down and give it a name.

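The argument is simple: don’t put intelligence in the network. The network should move bits. Everything else (error correction, reliability, encryption, ordering) belongs at the endpoints, because only the endpoints know what the application actually needs. The paper does allow lower layers to implement some of these functions as a performance optimization, but the endpoints can’t rely on them for correctness.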
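The paper’s own running example is a careful file transfer, and a toy version makes the point concrete. The sketch below is mine, not the paper’s: `flaky_hop`, `send_through_network`, and `end_to_end_transfer` are hypothetical names, the bit-flip model and the probabilities are made up, and the sender’s digest is assumed to reach the receiver intact. The network only moves bytes; the endpoints check the result and retry.

```python
import hashlib
import random
from typing import Tuple


def flaky_hop(data: bytes, rng: random.Random, p_corrupt: float) -> bytes:
    """A 'dumb' hop: forwards bytes and, with probability p_corrupt, flips one bit."""
    if data and rng.random() < p_corrupt:
        damaged = bytearray(data)
        damaged[rng.randrange(len(damaged))] ^= 0x01
        return bytes(damaged)
    return data


def send_through_network(data: bytes, rng: random.Random,
                         hops: int, p_corrupt: float) -> bytes:
    """Pass the payload through several hops; no hop checks or repairs anything."""
    for _ in range(hops):
        data = flaky_hop(data, rng, p_corrupt)
    return data


def end_to_end_transfer(payload: bytes, rng: random.Random, hops: int = 3,
                        p_corrupt: float = 0.3,
                        max_attempts: int = 50) -> Tuple[bytes, int]:
    """Endpoints own correctness: compare digests and resend until they match.

    Simplification (assumption): the sender's digest reaches the receiver intact.
    """
    expected = hashlib.sha256(payload).digest()
    for attempt in range(1, max_attempts + 1):
        received = send_through_network(payload, rng, hops, p_corrupt)
        if hashlib.sha256(received).digest() == expected:
            return received, attempt
    raise RuntimeError("transfer failed after max_attempts")


rng = random.Random(244)  # fixed seed so the demo is repeatable
data, attempts = end_to_end_transfer(b"careful file transfer", rng)
print(f"delivered intact after {attempts} attempt(s)")
```

Even if every hop added its own checksum, the receiver would still need this final check, because corruption can also happen inside a router or on the destination host. Per-hop checks can cut down on retries, but they don’t remove the need for the end-to-end one.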

What’s easy to forget is that in 1981 this was not the consensus view. Winstein pulled a literal 1981 MIT lab catalog off his shelf and walked us through the world that produced this paper. Computer science was small. Jerry Saltzer, one of the authors, had built man pages, Runoff (the ancestor of the Unix text-formatting tools), and Kerberos. But Winstein made a point that stuck with me: Saltzer’s real contribution was his students. David Clark and David Reed, the co-authors, were both Saltzer’s students. The ideas outlasted the systems.

As late as 1995, it was not obvious the internet would win. Networking textbooks from that era described a “smart network” built by telephone companies: ultra-reliable, with intelligence baked into the infrastructure. Then they mentioned this other thing, a “stupid computer science thing called the Internet,” that would eventually need to be replaced by the official standard, OSI. By 2000, the stupid thing had won by default. Amazon, eBay, and Google had all been built on the dumb network. The smart one never shipped.

What I keep coming back to with this paper is where we put intelligence in our own stack. The E2E argument says it belongs at the edges, either in the customer-facing layer or down at the node and host level, not in the core control plane. I see this tension in platforms like Kubernetes, which tries to be smart about orchestration and allocation in its core logic rather than delegating up to the customer or down the stack. It’s not necessarily wrong, but it’s a choice, and reading this paper made me more aware of when we’re making that choice deliberately and when we’re making it by accident.

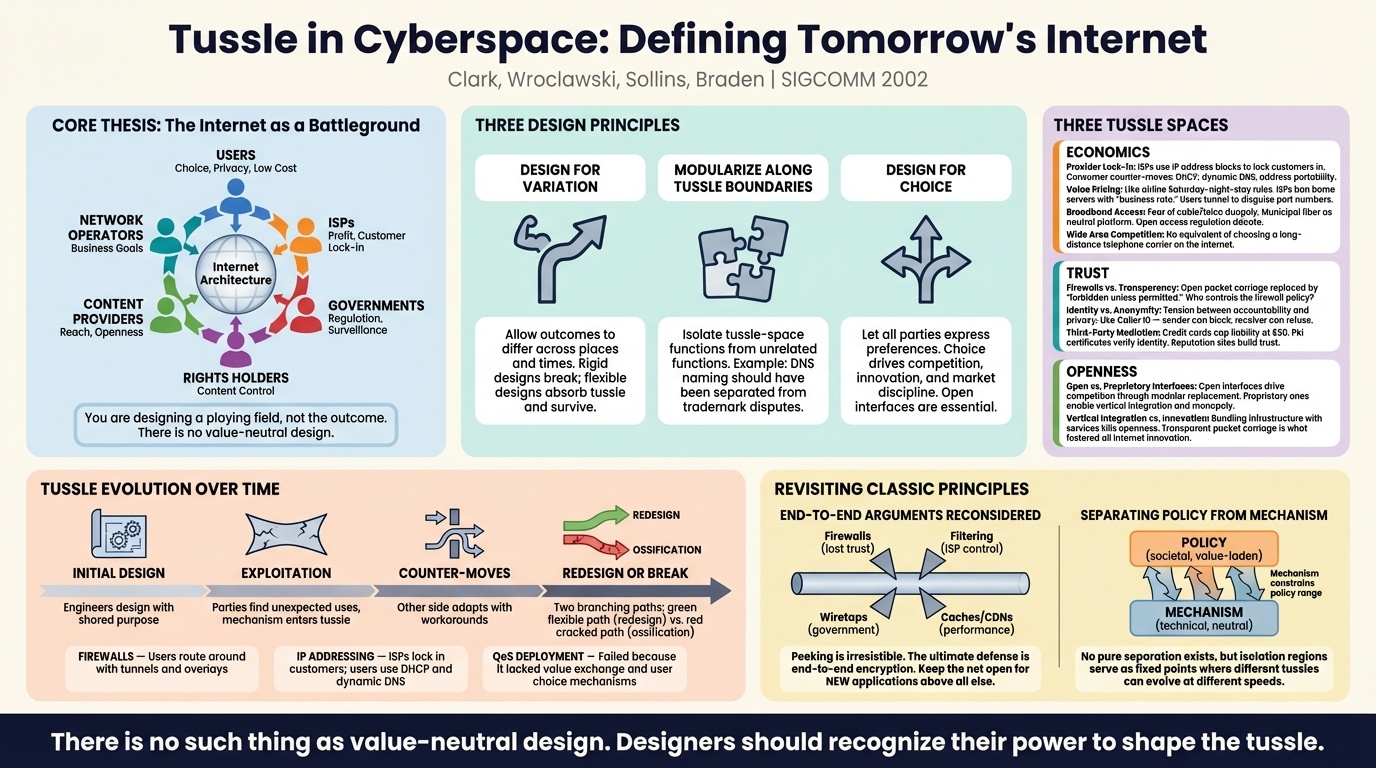

Tussle in Cyberspace

Twenty-one years after the End-to-End paper, the same research group published a follow-up. The tone was completely different. Mazières pointed out that Dave Clark had been the junior author in 1981 and was now the senior author in 2002. The internet had gone from nothing to the dominant communications platform in the world. He described them as being in “Frankenstein mode,” looking at what they’d built and asking what had gone wrong.

The paper’s thesis is that the internet isn’t a technical system. It’s a tussle space where users, ISPs, governments, and content providers all fight for control, and the architecture determines who can win. Mazières distilled the design principle into one line: “Design the playing field, not the outcome.”

That framing hit me in a specific way. In cloud infrastructure, our customers range from two-person startups to Fortune 50 enterprises. The products need to fit all sizes, which means they need to be a wide playing field, not a prescribed set of outcomes. When we get this wrong and build too much opinion into the platform, we end up serving one segment well and making the other miserable.

There is a real tussle in cloud platforms, though it’s not between the platform and the customer. It’s between customers. Enterprise customers bring in the bulk of the revenue and tend to get prioritized in capacity allocation. The startups and smaller shops sometimes don’t get the compute they need. The playing field isn’t level, and that’s largely because the business reality is about maximizing long-term revenue, which mostly comes from enterprise contracts. It’s an observation, not a complaint. But reading this paper made me think about it differently. The architecture of how we allocate capacity is a choice about who wins, whether we frame it that way or not.

Mazières also used ad blockers as an example of how tussles evolve over time: someone builds a blocker, then websites detect the blocker, then the blocker adapts, and so on. It’s a multi-round process where nobody ever fully wins. The paper argues that the right approach is to design systems that allow these tussles to play out rather than trying to resolve them in the architecture itself.

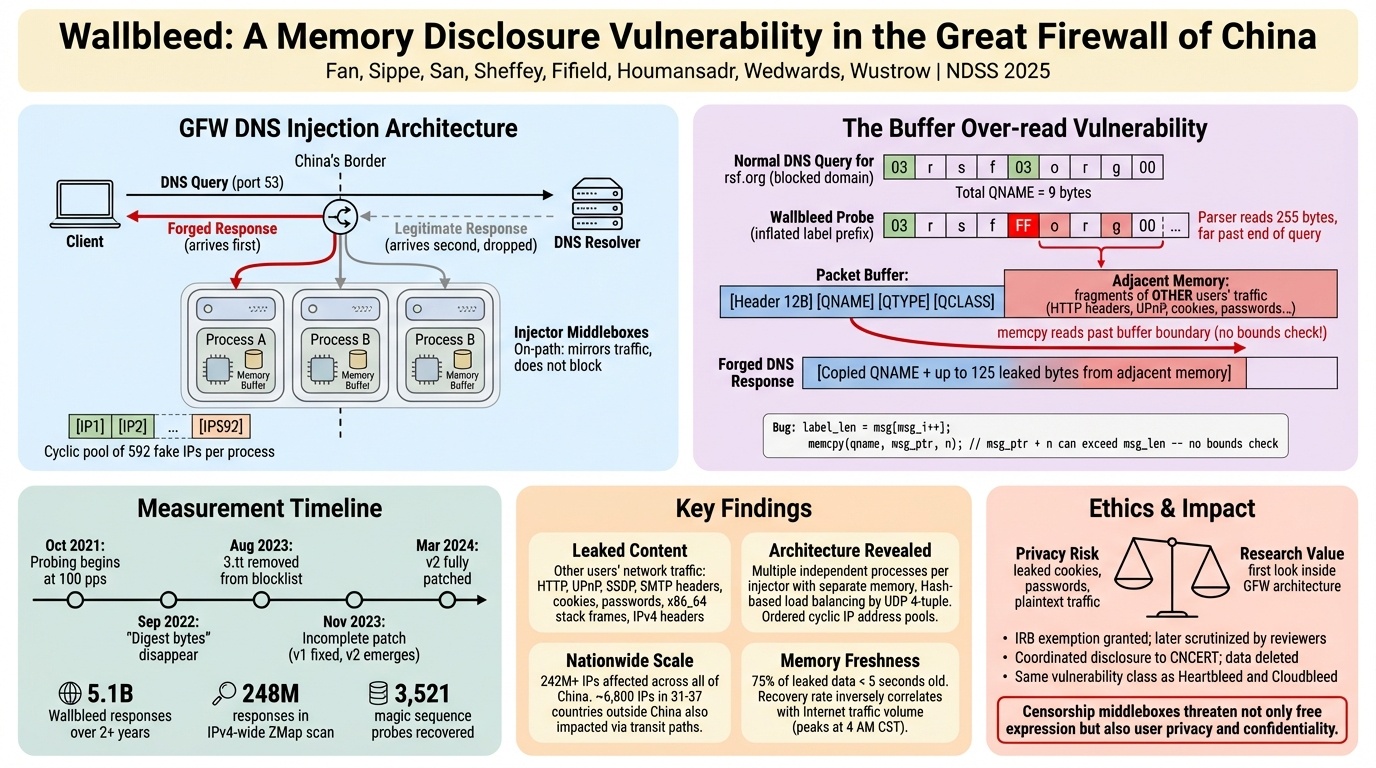

Wallbleed

The third paper was different. Wallbleed is about China’s Great Firewall. Researchers reverse-engineered how it works and found a buffer over-read in the censorship infrastructure itself: a memory-disclosure bug in the spirit of Heartbleed, and ironically a close cousin of the buffer overflow that powered the 1988 Morris Worm.
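To make the memory-leak idea concrete, here is a toy sketch. It is not the firewall’s actual code, and every name in it is hypothetical. It assumes a C-style parser that trusts a sender-supplied length byte and copies that many bytes even when the packet is shorter, so whatever sits next to the packet in memory leaks out. Python is memory-safe, so a flat `bytearray` stands in for process memory.

```python
def build_memory(packet: bytes, neighbour: bytes) -> bytearray:
    """Model a flat buffer where unrelated data sits right after the packet."""
    return bytearray(packet + neighbour)


def echo_label_unsafe(memory: bytearray) -> bytes:
    """Trust the first byte as the label length and copy that many bytes.

    Nothing checks the claimed length against the real packet size,
    so the copy can run into the neighbouring data.
    """
    claimed_len = memory[0]
    return bytes(memory[1:1 + claimed_len])


def echo_label_checked(memory: bytearray, packet_len: int) -> bytes:
    """Same parser, but it rejects lengths that run past the end of the packet."""
    claimed_len = memory[0]
    if 1 + claimed_len > packet_len:
        raise ValueError("claimed length runs past the end of the packet")
    return bytes(memory[1:1 + claimed_len])


# The sender claims a 12-byte label but only supplies 3 bytes.
packet = bytes([12]) + b"abc"
memory = build_memory(packet, b"SECRETKEY")

leaked = echo_label_unsafe(memory)
print(leaked)  # b'abcSECRETKEY'
```

Calling `echo_label_checked(memory, len(packet))` on the same input raises `ValueError` instead of returning the neighbouring bytes. The fix is a single bounds check.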

Winstein didn’t open with the technical findings. He started with ethics. He said something like, Stanford probably wants you to think that technical decisions and ethical decisions are often linked. Most of the choices we make as computer scientists don’t have an ethical dimension. Should a button be blue or turquoise? But it’s easy to miss the ones that do.

He traced a line from Vannevar Bush’s 1945 “As We May Think” through Barlow’s “Declaration of the Independence of Cyberspace” to the founding ethos of Silicon Valley. The idea was that information would be digitized, accessible to all, and would lead to a flowering of liberty. The Digital Library Project got renamed. It became Google.

Against that backdrop, the Wallbleed paper is uncomfortable. The internet, designed so no one could discriminate, is being used as a censorship apparatus. And the researchers studying it face real risk. Winstein noted that the system changed over the years they were analyzing it, there’s no way to reproducibly run it, and “if you do too much of it, people will come to your house and abduct you.”

The anecdote that stuck with me was about Kolmogorov. The Soviet mathematician read Shannon’s information theory in Russian translation, but the translators had censored a key chapter for being “contrary to Marxist principles.” Kolmogorov independently rediscovered the same result. Censorship doesn’t just hide information. It wastes talent.

I’ll be honest about my own reaction to this paper. It’s important work, and the technical approach of probing the firewall’s memory buffer is genuinely interesting. But the paper was written entirely by American researchers without any Chinese co-authors, studying a Chinese system from the outside. It’s hard not to read some of it as sanctimonious. The ethics of censorship are real and serious, but framing them purely from an American perspective flattens the complexity. Our own government seizes domain names and censors content when it wants to. Mazières made this point himself, noting that “if you set up totally-official-birkin-bags.com, our government would censor you pretty quickly.”

The connection to the first two papers is straightforward. The end-to-end argument says don’t build intelligence into the network. The Great Firewall is what happens when someone builds intelligence into the network for the explicit purpose of controlling what flows through it. The architecture that was supposed to make discrimination impossible turned out to leave plenty of room for it.

Part 1 of 4 in “Papers Your Senior Engineers Should Have Read” from CS244C at Stanford, Winter 2026.